
9/26/2023 Geometric deep learning

Spatial models are preferred over spectral models for reasons of efficiency, generality, and flexibility. First, spectral models are less efficient than spatial models because they need to perform eigendecomposition or handle the whole graph at the same time. Spatial models scale better to large graphs because they perform convolutions directly in the graph domain via information propagation, and the computation can be performed on a batch of nodes instead of the whole graph. Second, spectral models assume a fixed graph and, because they rely on a graph Fourier basis, generalize poorly to new graphs: any perturbation to a graph results in a change of eigenbasis. Spatial models perform graph convolutions locally on each node, which allows weights to be shared across different structures and locations. Finally, spectral methods are limited to undirected graphs, whereas spatial methods can handle a wider variety of graphs, such as graphs with edge inputs, directed graphs, signed graphs, and heterogeneous graphs, because of the flexibility of the aggregation function.

Introduction by example: GCN implemented in PyTorch Geometric

PyTorch Geometric is a geometric deep learning extension library for PyTorch.
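
As a rough illustration of what a GCN layer computes, the sketch below applies the commonly cited GCN propagation rule, ReLU(D^-1/2 (A + I) D^-1/2 X W), in plain Python. It is not PyTorch Geometric code and does not use that library's API. The helper names (`matmul`, `gcn_layer`), the three-node path graph, and the 1x1 weight matrix are all assumptions made for the example.

```python
import math


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def gcn_layer(adj, features, weight):
    """One GCN propagation step: ReLU(D^-1/2 (A + I) D^-1/2 X W)."""
    n = len(adj)
    # Add self-loops so that each node also keeps its own features.
    a_hat = [[adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)] for i in range(n)]
    # Node degrees of the self-looped graph.
    deg = [sum(row) for row in a_hat]
    # Symmetric normalisation of the adjacency matrix.
    norm = [[a_hat[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)] for i in range(n)]
    # Aggregate neighbour features, then apply the (here hand-picked) weights.
    out = matmul(matmul(norm, features), weight)
    # ReLU non-linearity.
    return [[max(0.0, v) for v in row] for row in out]


# Hypothetical toy input: a path graph 0 - 1 - 2 with one feature per node.
adj = [[0, 1, 0], [1, 0, 1], [0, 1, 0]]
features = [[1.0], [2.0], [3.0]]
weight = [[1.0]]
out = gcn_layer(adj, features, weight)
print(out)
```

In a real model the weight matrix would be learned and the adjacency would be stored sparsely; the dense lists here only keep the arithmetic visible.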

Spatial methods are preferred over spectral methods for a number of reasons, and spatial convolution is considered the more versatile method for learning on non-Euclidean structures. Spatial methods define graph convolutions based on a node’s spatial relations, which is analogous to the convolution operation in a classical CNN. An image can be considered a special form of graph in which each pixel is a node connected to its neighbouring pixels. A filter is applied to the patch of the image made up of a pixel and its neighbouring pixels. Similarly, spatial methods convolve a given node’s features with its neighbours’ features using a patch operator. The intuition behind spatial graph convolution is that the operation propagates and updates node features along edges.

Figure 1: 2D Convolution vs.

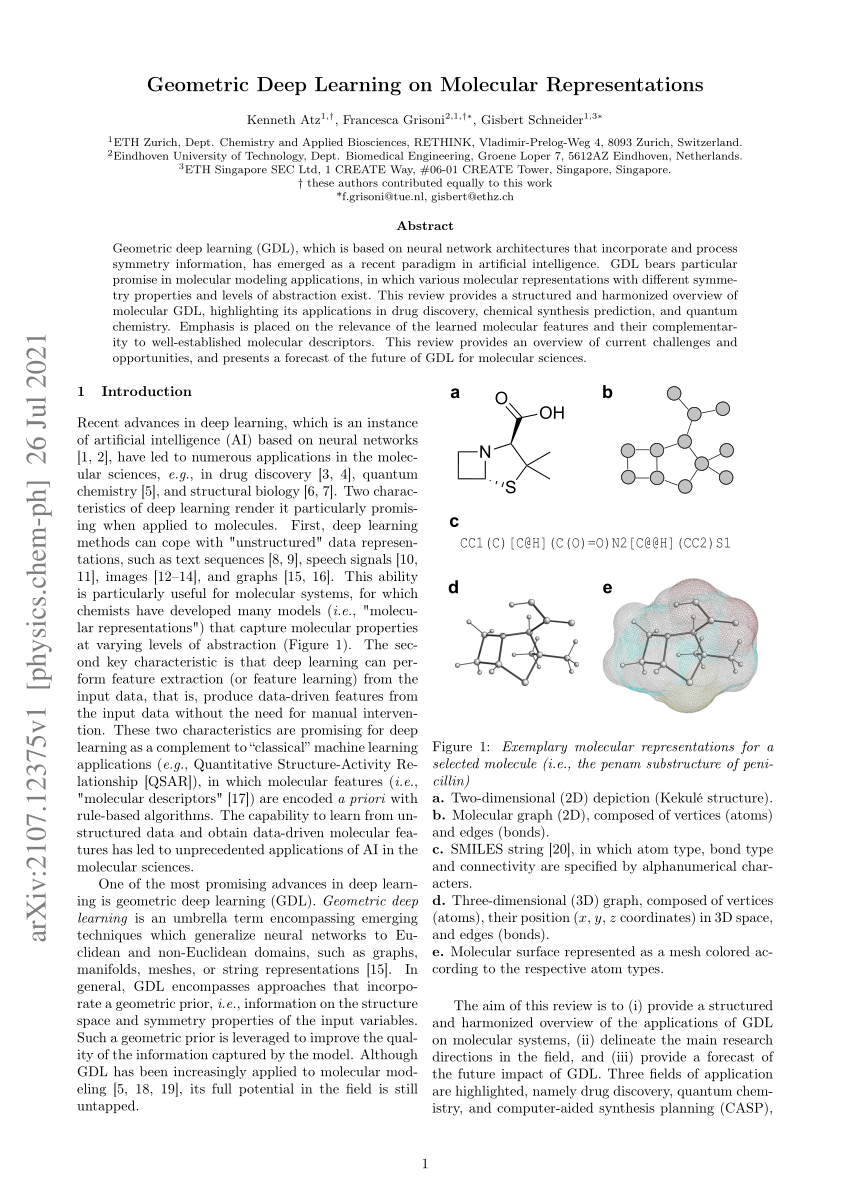
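
The idea of propagating and updating node features along edges can be sketched in a few lines of plain Python. This is only a minimal illustration: the function name `message_passing_step`, the use of a mean as the aggregation function, the decision to include a node's own features in the average, and the toy graph are all assumptions made for the example.

```python
def message_passing_step(neighbours, features):
    """Replace each node's features with the mean over itself and its neighbours."""
    updated = {}
    for node, feat in features.items():
        # Collect the node's own features plus one message per neighbour.
        messages = [feat] + [features[nb] for nb in neighbours[node]]
        # Averaging does not depend on the order in which neighbours are listed.
        updated[node] = [
            sum(m[d] for m in messages) / len(messages) for d in range(len(feat))
        ]
    return updated


# Hypothetical toy graph: node "a" is connected to "b" and "c".
neighbours = {"a": ["b", "c"], "b": ["a"], "c": ["a"]}
features = {"a": [1.0, 0.0], "b": [0.0, 1.0], "c": [2.0, 2.0]}
updated = message_passing_step(neighbours, features)

# Listing the neighbours of "a" in a different order gives the same result.
shuffled = {"a": ["c", "b"], "b": ["a"], "c": ["a"]}
same = message_passing_step(shuffled, features) == updated
print(updated, same)
```

Unlike an image filter, which relies on a fixed ordering of the pixels in a patch, this update treats the neighbours as an unordered set.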

I decided to write a series of articles to cover the things I’ve learned while working on my MEng thesis. My work is focused on applying Geometric Deep Learning methods for shape analysis in the medical setting. This first post is an overview of Geometric Deep Learning.

Geometric deep learning (GDL), a term first proposed by Bronstein et al., has emerged with the aim of generalizing deep learning models to non-Euclidean domains. This novel field of machine learning has been used successfully for building recommender systems, protein function prediction, fake news detection, and detection of cancer-beating molecules in food. GDL owes its success to the fact that it operates directly on the relational structure of a given problem. An example of such a structure is a graph, which can describe concepts ranging from a social network to a chemical compound.

Most graph neural network (GNN) architectures are based on message passing (spatial methods), where at each layer the nodes update their hidden representations by aggregating information they collect from their neighbours. A crucial difference from traditional neural networks operating on grid-structured data is the absence of a canonical ordering of the nodes in a graph. To address this, the aggregation function is usually constructed to be invariant to neighbourhood permutations.

A graph G is a pair (V, E) with a finite set of vertices V and a set of edges E. It captures interactions (edges) between individual units (nodes).

A manifold is a locally Euclidean space. In computer graphics, shapes are represented as discrete 2-dimensional manifolds. A discrete manifold has vertices uniformly sampled from the surface of the manifold, with edges expressing the local structure of the shape.

Spectral methods were the first approach to generalize the convolution operation to non-Euclidean domains. In practice, they are rarely used because they are computationally inefficient and don’t generalize well to different domains. Spectral graph convolution draws inspiration from Euclidean convolution in the spectral domain. In classical signal processing, the Fourier basis is used to compute spectral convolution (read this for an in-depth explanation of the convolution theorem). Given a graph, one way to generalize a convolutional architecture is to look at linear operators that commute with the graph Laplacian. This property implies operating on the spectrum of the graph weights, given by the eigenvectors of the graph Laplacian. The concept of graph convolution is explained in more depth here.
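
For reference, the spectral graph convolution described above is commonly written in terms of the eigendecomposition of the graph Laplacian. The notation below is an assumption borrowed from the general literature rather than from this post: A is the adjacency matrix of an undirected graph, D its diagonal degree matrix, U the matrix of Laplacian eigenvectors, Λ the diagonal matrix of eigenvalues, x a signal on the nodes, and g_θ a learnable filter.

```latex
L = D - A = U \Lambda U^{\top}, \qquad
\hat{x} = U^{\top} x, \qquad
g_\theta \star x = U \, g_\theta(\Lambda) \, U^{\top} x
```

Here U^T x plays the role of the graph Fourier transform. Since U is specific to one graph, a filter learned in this basis does not carry over directly to a different graph.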
